Frequently Asked Questions

This topic addresses some common questions that you may have when working with DME Component Libraries.

I have several cores on my machine. Will DME Component Libraries use them? How do I configure this?

DME Component Libraries is designed to scale with your application. To that end, the libraries use all logical processors on a single machine by default. Certain areas of DME Component Libraries, such as the Spatial Analysis Library, benefit greatly from using multiple logical processors in their calculations. You can control the number of threads DME Component Libraries uses by configuring the ThreadingPolicy. See the Multithreading topic in the Programmer's Guide for more details.

How can I read files that STK uses in DME Component Libraries?

The Data Interoperability topic in the Programmer's Guide describes how to read in or write out files used by STK.

The results I'm seeing don't look correct - what units are they in?

DME Component Libraries uses the SI system of units, unless otherwise stated in the documentation. However, many methods return units consistent with the units of the arguments passed in. See the Units topic in the Programmer's Guide for more details.

How do I get the velocity (or other higher derivative) from my object?

Methods in DME Component Libraries that return a Motion1<T> or Motion2<T, TDerivative> return the position of an object as the Value property. The first and second derivatives can be accessed through the FirstDerivative (get) and SecondDerivative (get) properties. Further derivatives can be accessed using the get method.

Methods that return the motion types (for example, evaluate methods) typically take an order parameter that specifies what order of result you'd like DME Component Libraries to try to calculate. Note that DME Component Libraries may not be able to calculate the order you request, in which case it will return the highest order that can be calculated. See the Motion1<T> and Motion2<T, TDerivative> topic in the Programmer's Guide for more details.

How do I add capabilities to a Platform in DME Component Libraries?

Extensions allow you to configure a Platform to be anything you like. Platforms allow the addition of extensions which provide specialized capabilities to the platform. There are many extensions included with DME Component Libraries, and you can write your own extensions as well.

All extensions are derived from ObjectExtension (which implements IServiceProvider) and can be added to objects that derive from ExtensibleObject, as the Platform object does. You can then access the specialized functionality of an extension on a Platform through the service provider mechanism. Extensions typically implement one or more services which provide the specialized functions for objects. When you have a Platform object, you can use helper methods (from the ServiceHelper class) to return a service from the Platform. If the service is not available, a ServiceNotAvailableException will be thrown. Extensions, combined with services, provide an abstract way to access different functionality for Platforms or other ExtensibleObjects within DME Component Libraries.

See the Programmer's Guide topics on Platforms and Service Providers for more details.

How do I model whiskbroom and pushbroom sensors?

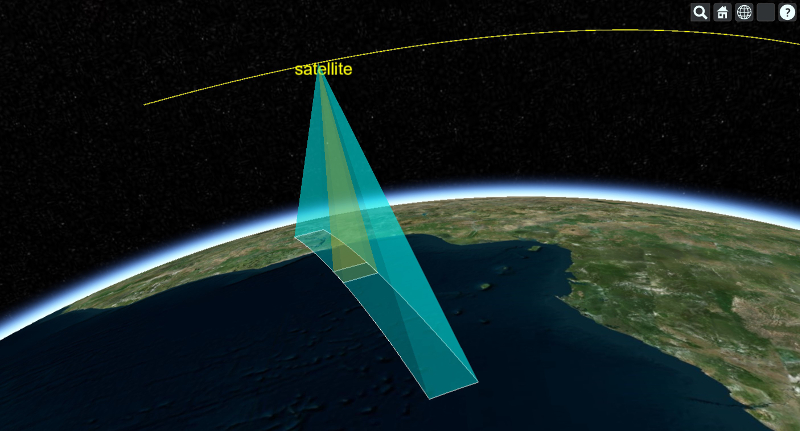

Let's begin with pushbroom sensors. We will model a pushbroom sensor as a series of small sensors (modules) that together compose a wide swath defining the field of regard. The small sensors are arranged in a line perpendicular to the direction of travel of the moving vehicle, which in our example is a satellite in low Earth orbit.

We first define our satellite platform and then our sensor. We will keep our example to four sensor modules for the sake of simplicity. Each of the sensor modules is defined by a platform that is specified relative to the satellite platform. This means that the location and orientation are relative to the satellite's location and orientation (reference frame). In this frame the origin is the satellite propagation point and the axes are those of the satellite platform. The satellite platform axes have the X axis aligned with the satellite's velocity vector and the Z axis pointing down towards Earth. The Y axis is perpendicular to the direction of travel and is a suitable axis for situating our sensors. We adjust the orientation of the sensors so that each small sensor covers an adjacent swath of ground and, combined, they cover the total field of regard.

```java
Platform satellite = new Platform("satellite");
satellite.setLocationPoint(propagator.createPoint());
// This orientation has the Z axis pointing towards the Earth and X axis pointing along the velocity vector.
// See the documentation for AxesVehicleVelocityLocalHorizontal for more information.
satellite.setOrientationAxes(new AxesVehicleVelocityLocalHorizontal(earth.getInertialFrame(), satellite.getLocationPoint()));

double sensorAngularHalfWidth = Trig.degreesToRadians(0.5);

// With the satellite constructed, we create the platforms that define our sensor.
// This sensor is comprised of a series of modules that compose the sensor's field of regard.
UnitQuaternion offset1 = new UnitQuaternion(new AngleAxisRotation(-3.0 * sensorAngularHalfWidth, UnitCartesian.getUnitX()));
Platform sensorPlatform1 = new Platform("module1");
sensorPlatform1.setLocationPoint(satellite.getLocationPoint());
sensorPlatform1.setOrientationAxes(new AxesFixedOffset(satellite.getOrientationAxes(), offset1));

UnitQuaternion offset2 = new UnitQuaternion(new AngleAxisRotation(-1.0 * sensorAngularHalfWidth, UnitCartesian.getUnitX()));
Platform sensorPlatform2 = new Platform("module2");
sensorPlatform2.setLocationPoint(satellite.getLocationPoint());
sensorPlatform2.setOrientationAxes(new AxesFixedOffset(satellite.getOrientationAxes(), offset2));

UnitQuaternion offset3 = new UnitQuaternion(new AngleAxisRotation(1.0 * sensorAngularHalfWidth, UnitCartesian.getUnitX()));
Platform sensorPlatform3 = new Platform("module3");
sensorPlatform3.setLocationPoint(satellite.getLocationPoint());
sensorPlatform3.setOrientationAxes(new AxesFixedOffset(satellite.getOrientationAxes(), offset3));

UnitQuaternion offset4 = new UnitQuaternion(new AngleAxisRotation(3.0 * sensorAngularHalfWidth, UnitCartesian.getUnitX()));
Platform sensorPlatform4 = new Platform("module4");
sensorPlatform4.setLocationPoint(satellite.getLocationPoint());
sensorPlatform4.setOrientationAxes(new AxesFixedOffset(satellite.getOrientationAxes(), offset4));
```

With all of the relevant platforms set up, we now add the sensor field of view extensions, which in turn define the sensor geometry.

```java
// We model all of the constituent sensors to have the same geometry.
RectangularPyramid rectangularPyramid = new RectangularPyramid(Trig.degreesToRadians(2.0), sensorAngularHalfWidth);

FieldOfViewExtension fovExtension1 = new FieldOfViewExtension(rectangularPyramid);
sensorPlatform1.getExtensions().add(fovExtension1);

FieldOfViewExtension fovExtension2 = new FieldOfViewExtension(rectangularPyramid);
sensorPlatform2.getExtensions().add(fovExtension2);

FieldOfViewExtension fovExtension3 = new FieldOfViewExtension(rectangularPyramid);
sensorPlatform3.getExtensions().add(fovExtension3);

FieldOfViewExtension fovExtension4 = new FieldOfViewExtension(rectangularPyramid);
sensorPlatform4.getExtensions().add(fovExtension4);
```
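
The four module offsets used above (-3, -1, 1, and 3 times the angular half-width) follow a simple pattern: module centers are spaced one full width apart and are symmetric about zero. The following is a minimal sketch of that arithmetic in plain Java, assuming every module has the same half-width. The class and method names are hypothetical and are not part of DME Component Libraries.

```java
/** Hypothetical helper that reproduces the module offset pattern; not a library class. */
class PushbroomOffsets {
    /**
     * Returns the rotation angle (radians) about the satellite X axis for each
     * module so that adjacent fields of view touch edge to edge.
     */
    static double[] moduleOffsets(int moduleCount, double angularHalfWidth) {
        double[] offsets = new double[moduleCount];
        for (int i = 0; i < moduleCount; i++) {
            // Centers are one full width (two half-widths) apart, symmetric about zero.
            offsets[i] = (2 * i - (moduleCount - 1)) * angularHalfWidth;
        }
        return offsets;
    }

    public static void main(String[] args) {
        double halfWidth = Math.toRadians(0.5);
        for (double offset : moduleOffsets(4, halfWidth)) {
            System.out.println(Math.toDegrees(offset));
        }
    }
}
```

Each returned angle could then be passed to an AngleAxisRotation about the X axis, as in the sample above, which would let the number of modules be changed without editing each offset by hand.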

Now let's move on to modeling a whiskbroom sensor. In contrast to the pushbroom sensor, where an array of fixed modules composes the sensor, the whiskbroom sensor involves a moving element that sweeps out the field of regard. This motion can be unidirectional (i.e. +Y to -Y each sweep) or bidirectional (back and forth). We will model the former. To produce this in DME Component Libraries, we model the movement with a rotating sensor platform. Unfortunately, a single rotating sensor would leave a gap when sweeping the ground covering the field of regard, because as the single sensor platform rotates around the satellite it points away from Earth after exiting the field of regard. To remedy this problem we model a series of coaxial rotating sensors spaced apart such that when one rotating sensor clears the field of regard, the next sensor is located at the start of the field of regard and can scan the region.

The code for the satellite platform mirrors that of the pushbroom sensor example, so we omit it. The field of view extensions for each of the rotating platforms are also set up in the same way as for the pushbroom sensor, so we omit that code as well. Here we show the setup for the whiskbroom rotating sensor platforms.

```java
// With the satellite constructed, we create the platforms that define our sensor.
// In our model the sensor only sweeps in one direction.
UnitQuaternion initialOffset1 = new UnitQuaternion(new AngleAxisRotation(0.0, UnitCartesian.getUnitX()));
Platform sensorPlatform1 = new Platform("sensorElement1");
sensorPlatform1.setLocationPoint(satellite.getLocationPoint()); // All of the sensors are located at the propagation point.
sensorPlatform1.setOrientationAxes(new AxesLinearRate(satellite.getOrientationAxes(), epoch, initialOffset1, UnitCartesian.getUnitX(), angularVelocity, 0.0));

UnitQuaternion initialOffset2 = new UnitQuaternion(new AngleAxisRotation(Constants.HalfPi, UnitCartesian.getUnitX()));
Platform sensorPlatform2 = new Platform("sensorElement2");
sensorPlatform2.setLocationPoint(satellite.getLocationPoint());
sensorPlatform2.setOrientationAxes(new AxesLinearRate(satellite.getOrientationAxes(), epoch, initialOffset2, UnitCartesian.getUnitX(), angularVelocity, 0.0));

UnitQuaternion initialOffset3 = new UnitQuaternion(new AngleAxisRotation(Math.PI, UnitCartesian.getUnitX()));
Platform sensorPlatform3 = new Platform("sensorElement3");
sensorPlatform3.setLocationPoint(satellite.getLocationPoint());
sensorPlatform3.setOrientationAxes(new AxesLinearRate(satellite.getOrientationAxes(), epoch, initialOffset3, UnitCartesian.getUnitX(), angularVelocity, 0.0));

UnitQuaternion initialOffset4 = new UnitQuaternion(new AngleAxisRotation(Constants.ThreeHalvesPi, UnitCartesian.getUnitX()));
Platform sensorPlatform4 = new Platform("sensorElement4");
sensorPlatform4.setLocationPoint(satellite.getLocationPoint());
sensorPlatform4.setOrientationAxes(new AxesLinearRate(satellite.getOrientationAxes(), epoch, initialOffset4, UnitCartesian.getUnitX(), angularVelocity, 0.0));
```

Notice that in the system of sensors modeling the whiskbroom sensor, every element rotates at the same angular velocity, and the elements are offset from one another by an angle that corresponds to our desired scan geometry. See the accompanying figure for a visualization of this configuration.

How can I use a TLE to generate a state vector at a given time?

TLEs are propagated in DME Component Libraries using an Sgp4Propagator object. Create an Sgp4Propagator with your TLE (using a TwoLineElementSet object) and then get an evaluator from the propagator. Then, evaluate the propagator at your desired time to return a Motion1<Cartesian> object. Be sure to pass in the order parameter to the evaluate method indicating how many derivatives you'd like the evaluator to produce. The resulting Motion1<Cartesian> object now contains the Cartesian values at the desired time which can be used as a state vector.

How do I determine the time of closest approach (TCA) and the range at TCA for an object?

This code sample shows how to calculate the time and distance of closest approach between an Iridium satellite and a facility on the Earth.

```java
Vector vector = new VectorApparentDisplacement(facility.getLocationPoint(), iridiumSatellite.getLocationPoint(), earth.getInertialFrame());
final VectorEvaluator evaluator = vector.getEvaluator();

JulianDateFunctionExplorer explorer = new JulianDateFunctionExplorer();
explorer.setFindAllExtremaPrecisely(true);

JulianDateFunctionSampling sampling = new JulianDateFunctionSampling();
sampling.setMinimumStep(Duration.fromSeconds(1.0));
sampling.setMaximumStep(Duration.fromSeconds(60.0));
sampling.setDefaultStep(Duration.fromSeconds(30.0));
sampling.setTrendingStep(Duration.fromSeconds(1.0));

// tell the JulianDateFunctionExplorer how to sample
IJulianDateFunctionSampler functionSampler = sampling.getFunctionSampler();
explorer.setSampleSuggestionCallback(JulianDateSampleSuggestionCallback.of(functionSampler::getNextSample));

// add the function to explore: the vector magnitude in this case.
explorer.getFunctions().add(JulianDateSimpleFunction.of(date -> evaluator.evaluate(date).getMagnitude()));

// add a method that processes the extrema when found
explorer.addLocalExtremumFound(EventHandler.of((sender, e) -> {
    JulianDateFunctionExtremumFound finding = e.getFinding();
    if (!finding.getIsEndpointExtremum() && finding.getExtremumType() == ExtremumType.MINIMUM) {
        resultSet.put(finding.getExtremumDate(), finding.getExtremumValue());
    }
}));

// start exploring between the start and stop time
explorer.explore(start, stop);
```

Note that you may want to limit your exploration start and stop times to those when you have line of sight access between the objects. This example uses no such constraint and will return the time and minimum distance between the objects for each orbit regardless of Earth obstruction.

More details about sampling and other types of functions that can be explored are in the Exploring Functions topic in the Programmer's Guide.
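
To illustrate the general idea of locating a range minimum by sampling and then refining, here is a minimal sketch in plain Java. It is not how JulianDateFunctionExplorer is implemented; it assumes the range function has a single minimum inside the bracket around the smallest sample, and it finds only one minimum rather than one per orbit. The class name, the Minimum holder, and the use of seconds as a plain double are all assumptions made for the example.

```java
import java.util.function.DoubleUnaryOperator;

/** Hypothetical coarse-sample-then-refine minimum search; not a library class. */
class ClosestApproachSketch {
    /** Time (seconds) and range (meters) at the minimum that was found. */
    static final class Minimum {
        final double time;
        final double range;

        Minimum(double time, double range) {
            this.time = time;
            this.range = range;
        }
    }

    /**
     * Samples range(t) on [start, stop] every step seconds, brackets the smallest
     * sample, then narrows the bracket with a ternary search until it is shorter
     * than tolerance. Assumes the function is unimodal inside the bracket.
     */
    static Minimum findMinimum(DoubleUnaryOperator range, double start, double stop,
                               double step, double tolerance) {
        double bestTime = start;
        double bestRange = range.applyAsDouble(start);
        for (double t = start + step; t <= stop; t += step) {
            double r = range.applyAsDouble(t);
            if (r < bestRange) {
                bestRange = r;
                bestTime = t;
            }
        }
        // The true minimum lies within one step of the smallest sample.
        double lo = Math.max(start, bestTime - step);
        double hi = Math.min(stop, bestTime + step);
        while (hi - lo > tolerance) {
            double m1 = lo + (hi - lo) / 3.0;
            double m2 = hi - (hi - lo) / 3.0;
            if (range.applyAsDouble(m1) < range.applyAsDouble(m2)) {
                hi = m2;
            } else {
                lo = m1;
            }
        }
        double tca = 0.5 * (lo + hi);
        return new Minimum(tca, range.applyAsDouble(tca));
    }
}
```

The step plays a role loosely analogous to the default sampling step in the sample above: it must be small enough that each minimum of interest is bracketed by neighboring samples.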

How do I convert from one reference frame to another?

Converting from one ReferenceFrame to another is best done using the GeometryTransformer class. See the Programmer's Guide topic on Reference Frames and Transformations for details.

How can I determine where a vector, fixed to a satellite's axis, points on the Earth?

Here's one way to do this:

```java
// observe groundPointingVector in Earth's fixed frame
VectorEvaluator pointingVectorEvaluator = GeometryTransformer.observeVector(groundPointingVector, earth.getFixedFrame().getAxes());

// observe the satellite's position in the Earth's fixed frame
PointEvaluator satellitePositionEvaluator = GeometryTransformer.observePoint(satelliteLocation, earth.getFixedFrame());

Cartesian svPosition = satellitePositionEvaluator.evaluate(timeToEvaluate);
Cartesian pointingVector = pointingVectorEvaluator.evaluate(timeToEvaluate);

// use the Intersections method to determine where the vector intersects the Earth's ellipsoid at this timeToEvaluate
double[] intersectResults = earth.getShape().intersections(svPosition, pointingVector.normalize());

// calculate the ground position where the groundPointingVector intersects the Earth
// assuming intersectResults is not empty and that intersectResults[0] is the minimum value in the intersectResults array
Cartesian surfacePosition = Cartesian.add(svPosition, pointingVector.multiply(intersectResults[0]));

// convert the results to a latitude and longitude:
Cartographic surfacePositionLatLong = earth.getShape().cartesianToCartographic(surfacePosition);
```
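
For readers who want to see the geometry behind the intersection step, the following is a minimal sketch of a ray and ellipsoid intersection in plain Java. It is a stand-in written for illustration, not the library's implementation; the class name, the array-based vectors, and the choice to return an empty array on a miss are assumptions. The direction must be nonzero, and if it is a unit vector the returned values are distances in the same units as the radii.

```java
/** Hypothetical ray and ellipsoid intersection; not a library class. */
class RayEllipsoidSketch {
    /**
     * Returns the values s >= 0, smallest first, at which origin + s * direction
     * crosses the ellipsoid (x/a)^2 + (y/b)^2 + (z/c)^2 = 1.
     * The array is empty when the ray misses the ellipsoid.
     */
    static double[] intersections(double[] origin, double[] direction,
                                  double a, double b, double c) {
        // Scale each axis so the ellipsoid becomes a unit sphere.
        double ox = origin[0] / a, oy = origin[1] / b, oz = origin[2] / c;
        double dx = direction[0] / a, dy = direction[1] / b, dz = direction[2] / c;

        // Solve |o + s d|^2 = 1, which is a quadratic in s.
        double qa = dx * dx + dy * dy + dz * dz;
        double qb = 2.0 * (ox * dx + oy * dy + oz * dz);
        double qc = ox * ox + oy * oy + oz * oz - 1.0;
        double discriminant = qb * qb - 4.0 * qa * qc;
        if (discriminant < 0.0) {
            return new double[0]; // the line never touches the ellipsoid
        }
        double root = Math.sqrt(discriminant);
        double s1 = (-qb - root) / (2.0 * qa);
        double s2 = (-qb + root) / (2.0 * qa);
        if (s2 < 0.0) {
            return new double[0]; // the ellipsoid is behind the ray
        }
        if (s1 < 0.0) {
            return new double[] { s2 }; // the origin is inside the ellipsoid
        }
        return new double[] { s1, s2 };
    }
}
```

Checking the length of the returned array before reading the first element avoids the failure case that the sample above assumes away, namely a pointing vector that misses the Earth.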

How can I transform an SGP4 propagated ephemeris into ECI or ECF?

One way to do this is outlined here:

```java
PointEvaluator evaluator = GeometryTransformer.observePoint(point, earth.getInertialFrame());
ArrayList<Motion1<Cartesian>> inertialElements = new ArrayList<>();
JulianDate start = new GregorianDate(2012, 2, 5).toJulianDate();
for (int i = 0; i < 1441; i++) {
    JulianDate date = start.addSeconds(i * 60.0);
    inertialElements.add(evaluator.evaluate(date, 1));
}
```

By specifying a different ReferenceFrame, you can get the coordinates in any frame. In this case, we'll get answers back in the Earth's fixed frame:

```java
evaluator = GeometryTransformer.observePoint(point, earth.getFixedFrame());
```

How do I calculate the number of orbital passes for a satellite?

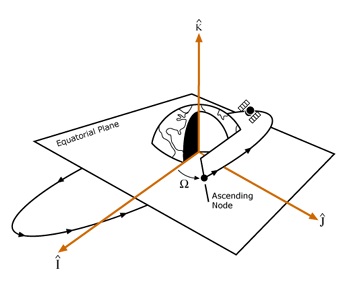

In order to count the number of orbits we must first define the event that indicates the start of an orbit pass. We call this event the orbit's pass break. One way a pass break can be defined is as the time when a satellite crosses a specified latitude boundary in either the ECI or ECF coordinate system. Using this definition we can also choose the direction of motion (ascending or descending) of the satellite at the specified latitude that defines the pass break. This information is all we need to count the orbits over a given time span.

Most satellite systems count orbits by specifying that a new pass begins when the satellite passes through the inertial equator at the ascending node. The accompanying diagram demonstrates this scenario.

The following code sample shows how to count the number of passes a satellite has over a given time span. Begin by specifying the analysis time span and initializing the propagator. In this example the TwoBodyPropagator is used, but this code may be used with other propagators.

```java
// Create the analysis start and end time
JulianDate start = new GregorianDate(2020, 5, 27, 16, 0, 0.0).toJulianDate();
JulianDate end = start.addDays(5.0);

// Initialize the propagator
double semimajorAxis = 6700000.0;
double eccentricity = 0.2;
double inclination = Trig.degreesToRadians(28.5);
double argumentOfPerigee = 0.0;
double raan = 0.0;
double trueAnomaly = 0.0;
double gravitationalParameter = EarthGravitationalModel2008.GravitationalParameter;
KeplerianElements elements = new KeplerianElements(semimajorAxis, eccentricity, inclination,
                                                   argumentOfPerigee, raan, trueAnomaly, gravitationalParameter);
TwoBodyPropagator propagator = new TwoBodyPropagator(start, earth.getInertialFrame(), elements);

// Set the reference frame to our desired frame
DateMotionCollection1<Cartesian> state = propagator.propagate(start, end, Duration.fromSeconds(30.0), 0, earth.getInertialFrame());
```

After propagating we count the number of orbits. Start by defining the latitude that marks a new orbit, which is 0 degrees in this example (crossing zero in Z in ECI). This example uses the inertial equator as the latitude boundary, but other latitudes can be specified as defined in the ECI frame. Then, at each time step of the ephemeris, if the satellite passes through the latitude boundary, increment the number of orbits.

```java
// Count the number of orbits using the latitude boundary which is 0, which is crossing 0 in Z
double planarBoundary = 0.0;
int orbits = 0;
for (int i = 0; i < state.getValues().size() - 1; ++i) {
    double currentStateZ = state.getValues().get(i).getZ();
    double nextStateZ = state.getValues().get(i + 1).getZ();
    // Satellite begins a new orbit if its latitude goes through the latitude boundary
    if (currentStateZ < planarBoundary && nextStateZ >= planarBoundary) {
        ++orbits;
    }
}
```
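
The same crossing test can be written against plain arrays, which also makes it easy to estimate each crossing time by linear interpolation between the two bracketing samples. The sketch below is an illustration only; the class and method names are hypothetical, times are plain seconds rather than JulianDate values, and linear interpolation is only a rough estimate compared with the function explorer approach.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical ascending-crossing counter over sampled data; not a library class. */
class AscendingCrossingSketch {
    /**
     * Returns the interpolated times at which z rises through boundary.
     * times and z must have the same length, with times strictly increasing.
     */
    static List<Double> ascendingCrossings(double[] times, double[] z, double boundary) {
        List<Double> crossings = new ArrayList<>();
        for (int i = 0; i < z.length - 1; i++) {
            // Same test as the loop above: below the boundary now, at or above it next.
            if (z[i] < boundary && z[i + 1] >= boundary) {
                // The denominator is positive because z[i] < boundary <= z[i + 1].
                double fraction = (boundary - z[i]) / (z[i + 1] - z[i]);
                crossings.add(times[i] + fraction * (times[i + 1] - times[i]));
            }
        }
        return crossings;
    }
}
```

The size of the returned list is the pass count, and its entries bound how far the estimate can be from the true crossing: never more than one sample interval.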

A more advanced approach to counting the number of orbits is using a function explorer. This example uses a JulianDateFunctionExplorer to find the latitude crossing time more precisely. In our first example we only know that the orbit pass time falls between two ephemeris points (inclusive). With the function explorer we can get the time to within the desired precision.

Begin by creating a JulianDateFunctionExplorer and set the FindAllCrossingsPrecisely (get / set) property to true to find all times where the satellite passes through its pass break to our desired precision. Next we add the function to be evaluated: given a JulianDate, determine the latitude of the satellite using the propagator's evaluator and set the threshold value to be the latitude boundary. Configure the sampling method and subscribe to the event raised by the explorer each time the threshold is crossed. If the slope of the crossing is increasing, this means that the satellite is passing through the ascending node and the number of orbits can be incremented. We evaluate the explorer over the duration of the analysis and produce a list of precise crossing times in addition to the orbit pass count.

```java
JulianDateFunctionExplorer explorer = new JulianDateFunctionExplorer();
explorer.setFindAllCrossingsPrecisely(true);

MotionEvaluator1<Cartesian> evaluator = propagator.getEvaluator();

// add function to explorer -> latitude at a given state
explorer.getFunctions().add(JulianDateSimpleFunction.of(date -> evaluator.evaluate(date).getZ()), planarBoundary);

JulianDateFunctionSampling sampling = new JulianDateFunctionSampling();
sampling.setMinimumStep(Duration.fromSeconds(10.0));
sampling.setMaximumStep(Duration.fromHours(0.5));
sampling.setDefaultStep(Duration.fromSeconds(60.0));
IJulianDateFunctionSampler functionSampler = sampling.getFunctionSampler();
explorer.setSampleSuggestionCallback(JulianDateSampleSuggestionCallback.of(functionSampler::getNextSample));

final ArrayList<JulianDate> crossingDates = new ArrayList<>();
explorer.addThresholdCrossingFound(EventHandler.of((sender, e) -> {
    if (e.getFinding().getSlope() == FunctionSegmentSlope.INCREASING) {
        // positive, increasing through boundary
        crossingDates.add(e.getFinding().getCrossingDate());
    }
}));

explorer.explore(start, end);
orbits = crossingDates.size();
```

How do I calculate intervisibility between objects?

The term access is used throughout AGI products and refers to the intervisibility between objects, given any constraints on the objects. The Access topic in the Programmer's Guide contains an overview, and also covers access queries, which are the mechanism used to build complex questions about access in sophisticated scenarios.

How do I constrain intervisibility between objects?

AccessConstraints are used to limit access between objects. Without any constraints, there is always access between two objects. For example, if you want to determine when you can see a satellite from your facility on the Earth, you are assuming a constraint in that the Earth obstructs your view of the satellite during some portion of your analysis. In DME Component Libraries, you would add the CentralBodyObstructionConstraint to the access calculation to determine the viewing times from your facility.

When I attempt to get an evaluator for my access query, I get an exception saying 'Event times cannot be moved between participants in the access query because no links exist between them.' What does this mean?

Access queries require that a link path exist between the time observer specified in the AccessQuery and one of the participants in each constraint involved in the query. Link paths are deduced from constraints implementing ILinkConstraint. This means, for example, that if you have two constraints implementing ISingleObjectConstraint and applied to two different objects, and you include them in a single query, you must also have an ILinkConstraint which is applied to a link connecting the two objects.

Generally this is not a problem because most real-world situations have the necessary connectedness already. Access queries will also help avoid this problem by putting a query into disjunctive normal form before attempting to evaluate it. For more information about disjunctive normal form, see the next question. However, for unusual problems, you can use AlwaysSatisfiedLinkConstraint to create a path between two objects that will not affect the results of the computation.

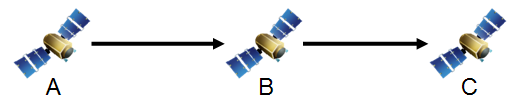

This behavior comes from the fact that access queries are designed to realistically model the sequence of events that occur during the course of an access event. Consider an example of three spacecraft, A, B, and C, where A transmits to B and B transmits to C.

Imagine that at time t the signal transmitted by A can be received by B. Because the speed of light is finite, B will not receive the signal until later, and how much later depends on how far apart A and B are at the time. So in considering the next leg of the pathway, it does not matter whether a signal from B can be received at C at time t because B will not have received the transmission from A by that time. Instead, whether or not C can receive a signal transmitted by B is considered at time t + Δt where Δt is the time it takes for the signal to travel from A to B.
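
The size of Δt is simply the link distance divided by the speed of light. As a minimal sketch in plain Java, assuming for simplicity that each hop distance is fixed (in a real scenario the distance changes with time, which is why the delay is evaluated at the times in question), the arrival time after a chain of hops can be accumulated as follows. The class and method names are hypothetical.

```java
/** Hypothetical light-time bookkeeping for a chain of links; not a library class. */
class LightTimeSketch {
    static final double SPEED_OF_LIGHT = 299_792_458.0; // meters per second

    /** One-way signal delay in seconds for a straight-line distance in meters. */
    static double delaySeconds(double distanceMeters) {
        return distanceMeters / SPEED_OF_LIGHT;
    }

    /** Time at which a signal sent at transmitTime arrives after each hop in turn. */
    static double arrivalTime(double transmitTime, double... hopDistancesMeters) {
        double time = transmitTime;
        for (double distance : hopDistancesMeters) {
            // Each hop can only start once the previous hop has delivered the signal.
            time += delaySeconds(distance);
        }
        return time;
    }
}
```

With a single hop this gives the t + Δt at which B receives the transmission from A; adding the B to C distance as a second hop gives the time at which C can first receive the relayed signal.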

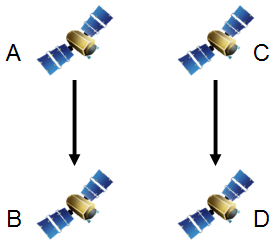

Now consider a different case where A transmits to B and C transmits to D.

If a query is constructed that requires that both of these links be available at the same time using an AND operator, this exception will be thrown when attempting to obtain the evaluator. The problem is that the access query system cannot determine the relationship between event times as observed by the different participants. If satellite A observes the start of access to occur at time t, satellite B will observe it to occur at time t + Δt. A similar statement is true of the end of access as well as the access events for the link between C and D. However, we cannot unambiguously do an interval intersection (AND) between the two access results. Do we intersect the times when A has access to B, as observed by A, with the times when C has access to D, as observed by D? Or do we use the times as observed by B and C? Maybe A and C? In this particular problem there are four different ways of doing the computation and the access query system has no way of determining which is appropriate for your problem.

Instead of taking a guess (and risking being wrong), the access query system throws this exception and requires you to explicitly tell it the relationship between these two apparently disconnected access problems. You can do that by adding an AlwaysSatisfiedLinkConstraint between the two participants through which the times should move. For example, if you create a LinkInstantaneous between A and D and add an AlwaysSatisfiedLinkConstraint using the link to the query, the access times of the first problem, as observed by A, will be intersected with the access times of the second problem, as observed by D.

How do access queries account for multi-hop link delay?

When getEvaluator is called on a query, the query first rewrites itself into an equivalent query in disjunctive normal form (DNF). DNF is a special form of a boolean expression where no conjunction (AND) has a sub-query which is a disjunction (OR). Rewriting a query in DNF has the effect of pushing as many constraints as possible down into AND queries. For example, the query expression A & B & (C | D) (where A, B, C, and D are constraints) will be rewritten in DNF as (A & B & C) | (A & B & D).
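
The rewrite can be illustrated with a small, self-contained sketch in plain Java. This is not the library's implementation and it ignores NOT and the counting queries; it only shows the distribution of AND over OR. Here a DNF expression is represented as a list of AND-terms, each of which is a list of constraint names, and all class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Hypothetical DNF builder: an OR of AND-terms, each a list of constraint names. */
class DnfSketch {
    /** A single constraint is a DNF expression with one term of one name. */
    static List<List<String>> constraint(String name) {
        List<List<String>> dnf = new ArrayList<>();
        dnf.add(new ArrayList<>(Arrays.asList(name)));
        return dnf;
    }

    /** OR of two DNF expressions: keep the AND-terms of both. */
    static List<List<String>> or(List<List<String>> left, List<List<String>> right) {
        List<List<String>> result = new ArrayList<>(left);
        result.addAll(right);
        return result;
    }

    /** AND of two DNF expressions: pair every left term with every right term. */
    static List<List<String>> and(List<List<String>> left, List<List<String>> right) {
        List<List<String>> result = new ArrayList<>();
        for (List<String> l : left) {
            for (List<String> r : right) {
                List<String> term = new ArrayList<>(l);
                term.addAll(r);
                result.add(term);
            }
        }
        return result;
    }

    /** Formats the expression in the same notation used in the text. */
    static String format(List<List<String>> dnf) {
        List<String> terms = new ArrayList<>();
        for (List<String> term : dnf) {
            terms.add("(" + String.join(" & ", term) + ")");
        }
        return String.join(" | ", terms);
    }

    public static void main(String[] args) {
        List<List<String>> query = and(and(constraint("A"), constraint("B")),
                                       or(constraint("C"), constraint("D")));
        System.out.println(format(query)); // prints (A & B & C) | (A & B & D)
    }
}
```

Because every AND-term ends up containing only constraints, each term can be checked for link connectivity on its own, which is what makes the form convenient for the delay accounting described in this answer.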

Access queries actually use a slightly relaxed form of DNF which allows queries such as AccessQueryAtLeastN, AccessQueryAtMostN, and AccessQueryExactlyN to exist in the rewritten expression. In true DNF, these queries would be rewritten in terms of ANDs, ORs, and NOTs. However, doing so tends to explode the size of the query unacceptably. Instead, these queries are treated in much the same way as an OR query. To learn how your query is being treated, call the toDisjunctiveNormalForm method on it and examine the return value. Queries have an overridden toString method that may help you understand the rewritten form at a glance.

Once the query is in DNF, all of the accounting for multi-hop link delay occurs in AccessQueryAnd. Other queries, such as AccessQueryOr, AccessQueryNot, AccessQueryAtLeastN, AccessQueryAtMostN, and AccessQueryExactlyN, simply pass the time observer requested by the user in the AccessQuery to their sub-queries. It is the job of AccessQueryAnd to accept times expressed on the time observer, move them to the correct participant to evaluate each constraint, and move the resulting intervals back to the time observer.

AccessQueryAnd begins this process by building a LinkGraph from the ConstrainedLink (get / set) of each link constraint in the query. Then, for each constraint, the shortest path through the graph is computed from the time observer to the observer required by the constraint. Intervals are expressed on different participants by adding the delay along each link in the path, evaluated at the start and end times, to the start and end times of the interval. Times can be moved in either direction. For example, given a time of reception, the time of transmission can be deduced. The directionality also does not have to be consistent through a path. If A and B both transmit to C, times can still be moved from A to B. The time of reception at C will be deduced from the time of transmission at A, and then a time of transmission from B will be deduced that will have C receiving that transmission at the same time it receives the transmission from A. Finally, after the intervals of satisfaction for the constraint have been computed, the intervals are moved back to the time observer along the same path.

If a path does not exist, an exception is thrown. See the question above for more information about the exception and how to work around it while maintaining realistic modeling of the problem.

How do I create an access query to model a Sun lighting constraint?

Let's say that you have a target that you want to observe with an optical device which can only track the target when the target is in direct sunlight. Since there are three objects involved (the observer, the target, and the Sun) it is not possible to use an AccessComputation to compute access. However, it is easy to do using access queries. Here is some code that shows how to do this:

```java
LinkSpeedOfLight lineOfSight = new LinkSpeedOfLight(target, observingSatellite, earth.getInertialFrame());

SunCentralBody sun = CentralBodiesFacet.getFromContext().getSun();
LinkSpeedOfLight sunlight = new LinkSpeedOfLight(sun, target, earth.getInertialFrame());

CentralBodyObstructionConstraint lineOfSightConstraint = new CentralBodyObstructionConstraint(lineOfSight, earth);
CentralBodyObstructionConstraint sunlightConstraint = new CentralBodyObstructionConstraint(sunlight, earth);

// The observing satellite can only see the target when it is not
// obstructed by the Earth and directly illuminated by the Sun
AccessQuery accessQuery = AccessQuery.and(lineOfSightConstraint, sunlightConstraint);
AccessEvaluator accessEvaluator = accessQuery.getEvaluator(observingSatellite);
AccessQueryResult accessResult = accessEvaluator.evaluate(startTime, stopTime);
```

By creating two links representing the line of sight between the objects, it is possible to set up an arbitrarily complex problem of intervisibility. For instance, it would be just as easy to constrain a case where you have multiple tracking stations which all need to track the target at the same time OR multiple targets which all need to be visible. All you need to do is set up all the constraints and use boolean operators to create the corresponding AccessQuery.

A more advanced example of a Sun lighting constraint uses ScalarOccultationDualCone to set specific allowable lighting fractions applied as a ScalarConstraint in the access query. Here is some code that shows how to do this:

```java
LinkSpeedOfLight lineOfSight = new LinkSpeedOfLight(target, observingSatellite, earth.getInertialFrame());

SunCentralBody sun = CentralBodiesFacet.getFromContext().getSun();
ScalarOccultationDualCone occultation = new ScalarOccultationDualCone(sun, target.getLocationPoint(), earth);

// The constraint is satisfied if the target satellite is between fully lit (0.0 occultation) and half lit (0.5 occultation)
AccessConstraint sunlightConstraint = new ScalarConstraint(target, occultation, 0.0, 0.5);

// The constraint is satisfied if the observing satellite can see the target satellite
CentralBodyObstructionConstraint lineOfSightConstraint = new CentralBodyObstructionConstraint(lineOfSight, earth);

// The observing satellite can only see the target when it is not
// obstructed by the Earth and directly illuminated by the Sun
AccessQuery accessQuery = AccessQuery.and(lineOfSightConstraint, sunlightConstraint);
AccessEvaluator accessEvaluator = accessQuery.getEvaluator(observingSatellite);
AccessQueryResult accessResult = accessEvaluator.evaluate(startTime, stopTime);
```

In what order are sub-queries evaluated?

When a composite access query like AccessQueryAnd is evaluated, it does not necessarily evaluate its sub-queries in the order in which they're specified. Instead, it attempts to evaluate them in the order that will result in the best performance; specifically, the fastest or cheapest sub-query is evaluated first. This way, subsequent, more expensive sub-queries will likely only need to be evaluated over a smaller interval, significantly improving overall performance. For example, in an AccessQueryAnd, only intervals for which all earlier sub-queries were satisfied need to be evaluated for a subsequent sub-query, because a single sub-query without access for an interval marks that interval as "no access" regardless of the status of the other sub-queries. Other composite queries improve performance using similar rules.

Access queries report their complexity or cost via the getEvaluationOrder method. A higher evaluation order means a more expensive query that should be evaluated later in the process. The EvaluationOrder (get / set) is a user-settable quantity on AccessConstraint, but it is usually not necessary to set it manually. All access constraints in DME Component Libraries have a reasonable default evaluation order based on an estimate of their complexity. For composite queries, the evaluation order is estimated based on the evaluation orders of their sub-queries.

Consider the following access query:

AccessQuery verboseQuery = new AccessQueryAnd(targetInView, facilitySeesSatellite, facilitySeesAircraft, new AccessQueryOr(aircraftAltitudeLessThan1000, aircraftAltitudeGreaterThan5000));

Suppose that in this example, targetInView has an evaluation order of 40, facilitySeesSatellite and facilitySeesAircraft each have an evaluation order of 20, and aircraftAltitudeLessThan1000 and aircraftAltitudeGreaterThan5000 each have an evaluation order of 18. The nested AccessQueryOr estimates its complexity by adding together the evaluation orders of its sub-queries, and returns 36 as its evaluation order. As a result, the outer AccessQueryAnd evaluates facilitySeesSatellite and facilitySeesAircraft first (evaluation order 20), followed by the inner AccessQueryOr (evaluation order 36), and finally targetInView (evaluation order 40). After each sub-query is evaluated, the next sub-query only needs to be evaluated over the intervals in which all previous sub-queries were satisfied. Performing the computations in this order improves performance, because time is not wasted calculating expensive constraints over irrelevant intervals.
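The ordering in this example can be reproduced with a small standalone sketch. It does not use the DME Component Libraries API: the SubQuery class and the or, sortedByCost, and exampleSequence helpers are hypothetical names introduced only for illustration, and the summing rule for the nested OR is taken from the description above.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

// Standalone illustration only; none of these types belong to DME Component Libraries.
final class EvaluationOrderSketch {

    // A named sub-query together with its estimated cost (its "evaluation order").
    static final class SubQuery {
        final String name;
        final int evaluationOrder;

        SubQuery(String name, int evaluationOrder) {
            this.name = name;
            this.evaluationOrder = evaluationOrder;
        }
    }

    // Models the nested OR from the example: its cost is the sum of its sub-queries' costs.
    static SubQuery or(String name, SubQuery... subQueries) {
        int total = 0;
        for (SubQuery subQuery : subQueries) {
            total += subQuery.evaluationOrder;
        }
        return new SubQuery(name, total);
    }

    // Returns the sub-queries sorted cheapest first. List.sort is stable,
    // so sub-queries with equal cost keep the order in which they were listed.
    static List<SubQuery> sortedByCost(List<SubQuery> subQueries) {
        List<SubQuery> sorted = new ArrayList<>(subQueries);
        sorted.sort(Comparator.comparingInt((SubQuery q) -> q.evaluationOrder));
        return sorted;
    }

    // Builds the example from the text and returns the names in evaluation sequence.
    static List<String> exampleSequence() {
        SubQuery altitudeOr = or("altitudeOr",
                new SubQuery("aircraftAltitudeLessThan1000", 18),
                new SubQuery("aircraftAltitudeGreaterThan5000", 18));
        List<SubQuery> andOperands = Arrays.asList(
                new SubQuery("targetInView", 40),
                new SubQuery("facilitySeesSatellite", 20),
                new SubQuery("facilitySeesAircraft", 20),
                altitudeOr);
        List<String> names = new ArrayList<>();
        for (SubQuery subQuery : sortedByCost(andOperands)) {
            names.add(subQuery.name);
        }
        return names;
    }

    public static void main(String[] args) {
        // Prints [facilitySeesSatellite, facilitySeesAircraft, altitudeOr, targetInView]
        System.out.println(exampleSequence());
    }
}
```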

Can I change the definition of my objects on the fly?

Definitional objects must be evaluated using the evaluator pattern described in the Programmer's Guide. Once you have an evaluator for your object, the evaluator will always represent the state of your object at the time that evaluator was created. If you change the definition of an object after an evaluator is created, the evaluator will not reflect those changes. You must get a new evaluator to see the effect of changes made to your object. As a rule of thumb, get a new evaluator whenever the definitional object changes, and then store it for future evaluations.
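The snapshot behavior can be illustrated with a minimal plain-Java sketch. The LinearDefinition class and its methods are hypothetical and are not part of DME Component Libraries; the sketch only mimics the idea that an evaluator captures the definition as it was when the evaluator was obtained.

```java
import java.util.function.DoubleUnaryOperator;

// Standalone illustration only; none of these types belong to DME Component Libraries.
final class EvaluatorSnapshotSketch {

    // Hypothetical definitional object: a value that grows linearly with time.
    static final class LinearDefinition {
        private double rate;

        LinearDefinition(double rate) {
            this.rate = rate;
        }

        void setRate(double rate) {
            this.rate = rate;
        }

        // Copies the current rate, so later changes do not affect the returned evaluator.
        DoubleUnaryOperator getEvaluator() {
            final double frozenRate = rate;
            return time -> frozenRate * time;
        }
    }

    // Returns the results of an evaluator obtained before a change and one obtained after it.
    static double[] demo() {
        LinearDefinition definition = new LinearDefinition(2.0);
        DoubleUnaryOperator oldEvaluator = definition.getEvaluator();

        definition.setRate(5.0); // the existing evaluator does not see this change
        DoubleUnaryOperator newEvaluator = definition.getEvaluator();

        return new double[] {
            oldEvaluator.applyAsDouble(10.0), // still uses rate 2.0
            newEvaluator.applyAsDouble(10.0)  // uses rate 5.0
        };
    }

    public static void main(String[] args) {
        double[] results = demo();
        System.out.println("old evaluator: " + results[0] + ", new evaluator: " + results[1]);
    }
}
```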

When is it beneficial to create multiple evaluators in the same EvaluatorGroup?

An EvaluatorGroup will generally improve the performance of your evaluators by caching results at the time passed into an evaluate method and returning that cached value when it gets called again at that time. For example, you may have one evaluator that computes the elevation of a satellite relative to a ground station, and another one that computes the range between that satellite and station. Without an EvaluatorGroup (or when using different groups), when you evaluate one of those evaluators and then the other at the same evaluation time, the position of the satellite, the position of the ground station, the various reference frame transformations, and so on would all be calculated twice, once for each evaluator. If instead you used the same EvaluatorGroup when you get both evaluators, all of the results of the sub-evaluations would be cached after the first evaluate call, and those cached values would be used in the second. Avoiding those redundant calculations improves performance.
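The effect of sharing a cache can be sketched in plain Java. This is not how EvaluatorGroup is implemented; the Group class, the toy evaluators, and the placeholder orbit numbers below are all hypothetical, and the sketch only counts how often a shared sub-computation runs with and without a common cache.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.DoubleUnaryOperator;

// Standalone illustration only; none of these types belong to DME Component Libraries.
final class SharedCacheSketch {

    // Hypothetical stand-in for a group: remembers sub-results for the most recent evaluation time.
    static final class Group {
        private double cachedTime = Double.NaN;
        private final Map<String, Double> cache = new HashMap<>();

        double getOrCompute(String key, double time, DoubleUnaryOperator computation) {
            if (time != cachedTime) {
                // A new evaluation time invalidates everything cached so far.
                cache.clear();
                cachedTime = time;
            }
            return cache.computeIfAbsent(key, k -> computation.applyAsDouble(time));
        }
    }

    // Counts how often the shared sub-computation actually runs.
    static int radiusComputations = 0;

    // Placeholder for an expensive sub-evaluation, such as a satellite position.
    static double satelliteRadius(double time) {
        radiusComputations++;
        return 7000.0 + 0.1 * time;
    }

    // Two toy evaluators that both depend on the same sub-evaluation.
    static double altitude(Group group, double time) {
        return group.getOrCompute("satelliteRadius", time, SharedCacheSketch::satelliteRadius) - 6378.0;
    }

    static double radiusRatio(Group group, double time) {
        return group.getOrCompute("satelliteRadius", time, SharedCacheSketch::satelliteRadius) / 6378.0;
    }

    // Both evaluators share one group, so the sub-evaluation runs once.
    static int computationsWithSharedGroup(double time) {
        radiusComputations = 0;
        Group shared = new Group();
        altitude(shared, time);
        radiusRatio(shared, time);
        return radiusComputations;
    }

    // Each evaluator has its own group, so the sub-evaluation runs twice.
    static int computationsWithSeparateGroups(double time) {
        radiusComputations = 0;
        altitude(new Group(), time);
        radiusRatio(new Group(), time);
        return radiusComputations;
    }

    public static void main(String[] args) {
        System.out.println("shared group: " + computationsWithSharedGroup(60.0)
                + ", separate groups: " + computationsWithSeparateGroups(60.0));
    }
}
```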

Note that if you are only using one evaluator, then there is no need to explicitly deal with an EvaluatorGroup yourself. A group will be created automatically internally.

In cases where you are obtaining multiple evaluators in the same group, you should call the optimizeEvaluators method on the group after you have obtained all of the evaluators you plan to use. This method will internally replace evaluators with caching wrappers. You should then call the updateReference method to obtain a caching wrapper for each of your top-level evaluators.

After changing properties of definitional objects, do not reuse an existing EvaluatorGroup to obtain new evaluators. Create a new group.

See the Evaluators And Evaluator Groups topic in the Programmer's Guide for more information about evaluator groups.

How can I get detailed signal propagation model losses?

There are several ways to go about getting this information. At the lowest level, ScalarPropagationLoss has an optional property, SelectedModels (get / set), that enables you to compute the loss for specific models. This property is null by default, indicating that when the signal propagation loss is calculated, the entire set of signal propagation models will be used (that is, the total loss will be computed). If this property contains a single model, such as FreeSpacePathLossModel, then the signal propagation loss will only be computed over that model. In addition to this single model computation, a subset of the available models may be chosen by setting the desired start and stop signal propagation models that correspond to any subset of the signal propagation model chain. See the following demonstration code for an example:

// PropagationModels.get(0) on the wireless link is free space loss model in this case,
// because we are using the default propagation models included with the wireless link.
SignalPropagationModel freeSpaceLossModel = wirelessLinkExtension.getPropagationModels().get(0);
ScalarPropagationLoss freeSpaceLoss = new ScalarPropagationLoss(link, graph, intendedSignal, freeSpaceLossModel);
ScalarEvaluator freeSpaceLossEvaluator = freeSpaceLoss.getEvaluator();
double freeSpaceLossValue = freeSpaceLossEvaluator.evaluate(evaluationTime);

If you are using the higher level CommunicationSystem, the detailed signal losses can be computed as part of the link budget. The "Detailed" methods in CommunicationSystem will create propagation loss scalars for each signal propagation model. The computed LinkBudget contains propagation losses from each signal propagation model. The following code illustrates the higher level construct:

Evaluator<LinkBudget> linkBudgetEvaluator = communicationSystem.getDetailedLinkBudgetEvaluator(link, intended, group);
group.optimizeEvaluators();
LinkBudget linkBudget = linkBudgetEvaluator.evaluate(evaluationTime);
// We can get the propagation loss per model from the evaluated budget:
for (LinkBudget.SignalPropagationModelLoss lossPerModel : linkBudget.getPropagationLossPerModel()) {
    // Each item contains the type of signal propagation model, and the loss from that model.
    System.out.format("Propagation model %s, loss (linear scale) %g%n",
                      lossPerModel.getSignalPropagationModelType().getSimpleName(),
                      lossPerModel.getPropagationLoss());
}

How do I compute the area of a set of EllipsoidSurfaceRegions? What if they overlap?

First, create a SurfaceRegionsCoverageGrid object from your array or list of EllipsoidSurfaceRegions. You'll need to specify the resolution of the grid as well. The smaller the resolution value (that is, the finer the grid), the more accurate the computed area.

Next, generate the grid by calling generateGridPoints on the SurfaceRegionsCoverageGrid object. This will return a list of GridPoints. Finally, iterate through the list of GridPoints and sum the Weight (get) property of each ellipsoid grid point. The weight property represents the amount of area that each local grid point contains. The sum will represent the total area your EllipsoidSurfaceRegions represent. A benefit to this approach is that if you are just computing the area of your regions, you do not need to calculate coverage at all.

When you generate the grid, if any of your EllipsoidSurfaceRegions overlap, the overlaps will be taken into account and removed. The list of GridPoints you get back will contain any overlapping areas just once.
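The idea of summing grid point weights, with overlaps counted once, can be sketched in plain Java. This sketch makes several simplifying assumptions: it uses flat rectangles instead of regions on an ellipsoid, and the Rectangle and WeightedPoint classes and the generateGridPoints and totalArea helpers are hypothetical stand-ins written for this example, not the library's types.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Standalone planar illustration only; none of these types belong to DME Component Libraries.
final class GridAreaSketch {

    // An axis-aligned rectangular region in a flat plane.
    static final class Rectangle {
        final double minX, minY, maxX, maxY;

        Rectangle(double minX, double minY, double maxX, double maxY) {
            this.minX = minX;
            this.minY = minY;
            this.maxX = maxX;
            this.maxY = maxY;
        }

        boolean contains(double x, double y) {
            return x >= minX && x <= maxX && y >= minY && y <= maxY;
        }
    }

    // A grid point whose weight is the area of the cell it represents.
    static final class WeightedPoint {
        final double x, y, weight;

        WeightedPoint(double x, double y, double weight) {
            this.x = x;
            this.y = y;
            this.weight = weight;
        }
    }

    // Lays a square grid over the bounding box of all regions and keeps each cell
    // whose center lies inside at least one region.
    static List<WeightedPoint> generateGridPoints(List<Rectangle> regions, double resolution) {
        double minX = Double.POSITIVE_INFINITY, minY = Double.POSITIVE_INFINITY;
        double maxX = Double.NEGATIVE_INFINITY, maxY = Double.NEGATIVE_INFINITY;
        for (Rectangle region : regions) {
            minX = Math.min(minX, region.minX);
            minY = Math.min(minY, region.minY);
            maxX = Math.max(maxX, region.maxX);
            maxY = Math.max(maxY, region.maxY);
        }
        int columns = (int) Math.ceil((maxX - minX) / resolution);
        int rows = (int) Math.ceil((maxY - minY) / resolution);
        List<WeightedPoint> points = new ArrayList<>();
        for (int i = 0; i < columns; i++) {
            for (int j = 0; j < rows; j++) {
                double x = minX + (i + 0.5) * resolution;
                double y = minY + (j + 0.5) * resolution;
                for (Rectangle region : regions) {
                    if (region.contains(x, y)) {
                        points.add(new WeightedPoint(x, y, resolution * resolution));
                        break; // count the cell once, even where regions overlap
                    }
                }
            }
        }
        return points;
    }

    // Sums the weights of all grid points to estimate the covered area.
    static double totalArea(List<WeightedPoint> points) {
        double area = 0.0;
        for (WeightedPoint point : points) {
            area += point.weight;
        }
        return area;
    }

    public static void main(String[] args) {
        // Two 4 x 4 squares that overlap in a 2 x 4 strip; the union covers 24 square units.
        List<Rectangle> regions = Arrays.asList(
                new Rectangle(0.0, 0.0, 4.0, 4.0),
                new Rectangle(2.0, 0.0, 6.0, 4.0));
        System.out.println(totalArea(generateGridPoints(regions, 0.5)));
    }
}
```

Because each cell is added at most once, the overlapping strip contributes to the sum a single time. A coarser resolution would use fewer, larger cells and would generally give a less accurate area.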

Where can I get terrain and imagery for use in DME Component Libraries?

A DME Component Libraries license includes use of an AGI-hosted premium STK Terrain Server, which provides global terrain data over the internet. See StkTerrainServer for more information.

Several other external sites for terrain and imagery data are listed on the AGI website: External Terrain and Imagery Sources.

My calculations with terrain are taking a long time, how can I speed these up?

There are two ways to calculate access using terrain: a TerrainLineOfSightConstraint, or an AzimuthElevationMask with an AzimuthElevationMaskConstraint. Which one is right depends on your situation. If both objects using the constraint are moving, TerrainLineOfSightConstraint is your only choice. If one of the objects is stationary, you can pre-compute an AzimuthElevationMask for that object. Whether you should use an AzimuthElevationMask mostly comes down to a size/speed/accuracy tradeoff, but it is usually worthwhile.

There are other steps you can take as well. Preloading your terrain into memory helps if you notice that the first calculations with terrain take longer than subsequent ones. Also, include a CentralBodyObstructionConstraint or ElevationAngleConstraint as a faster first-pass constraint, to avoid performing terrain calculations when the objects are not in view of each other. Finally, because DME Component Libraries uses all logical processors on a single machine by default, running on a machine with more processors will generally improve performance.

The Terrain topic in the Programmer's Guide contains more information about creating masks and caching terrain data.